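From 2019 to now, 《捕蛇者说》 has been running for six years. I enjoy talking with interesting people, so I have thoroughly enjoyed every recording. In terms of popularity, the knowledge management series is the undisputed peak, and the only time we reached an audience beyond our usual circle. A few other episodes are personal favorites, such as 《Ep 08. 如何成为一名开源老司机》 (how to become an open-source veteran) and 《Ep 27. 聊聊焦虑》 (talking about anxiety).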

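Why write a whole blog post about it? Because 《Ep 56. 对话 Hawstein:从独立开发,到追寻人生的意义》 (a conversation with Hawstein, from indie development to the search for meaning in life), which I recently recorded with Hawstein, is my favorite episode of these six years.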

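Six years ago, when the podcast had just started, I was a newcomer not long into my career, and all I cared about was gaining a foothold in the industry. Six years on, work has become a wearying routine, while thinking about life has occupied my mind over the past few years. 《捕蛇者说》 is a podcast built mainly on guest interviews and centered on "programming, programmers, and Python", and I do not intend to change that. But the fixed format and subject matter have also become a constraint, to the point that I have to guest on other podcasts to talk about ideas and interests outside of tech. As a result, more and more ideas have piled up in my head with nowhere to go. It sounds implausible, but it is true: I have a large backlog just of topics I want to turn into articles, never mind the scattered thoughts.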

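Fortunately, having Hawstein on the show finally gave me an outlet. I had invited him on Twitter, partly because he seemed to have a strong urge to express himself, and partly because the topics he mentioned in his reply were ones I happened to be very interested in as well. Unlike previous episodes, this time we prepared no outline at all. I was not worried in the slightest, because I knew the recording would turn out well, and it did, even better than I had expected. I really have to thank Hawstein here. Perhaps this is what it means to bring out the best in each other.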

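Of course, because this episode was not very "technical", several long-time listeners told us they could not get through it. I fully understand, and I was mentally prepared for that while planning the episode. Still, several listeners gave very positive feedback, which was nice to hear. Either way, I talked about what I wanted to talk about, and I believe it gave some listeners inspiration and food for thought. I could not ask for more.

The transcript of this episode can be found here.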

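This is the year I started taking investing seriously. After a few months of practice, and setting aside specific trades, I realized that my overall approach has some problems. This post is my attempt to sum them up.

My main problem is that I chased high-beta names too hard while ignoring the market leaders. For example, I never invested in Nvidia or Palantir this year, and never even put them on my watchlist. Hard to believe, right? Even though I knew they were bound to rise in this rebound, I refused to so much as look at them. Over these months, they gained no less than the small caps that routinely move 10% or 20% in a day, with far less volatility. I spent a lot of time looking at all kinds of small caps, and in the end I used my capital poorly because of bad calls, often just getting stopped out.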

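Going forward, I plan to allocate my capital like this:

60% in 3 to 4 high-certainty, high-growth market leaders, held for months to years:

- If I could do it over, for this run I would pick NVDA, VST, PLTR, and HOOD, at 15% each

30% in 1 to 2 short-term hot themes, held for weeks:

- Hot themes over the past few months have included nuclear energy, aerospace, rare earths, and quantum computing; they are not hard to find.

10% in the 1 wildly speculative stock currently being hyped, held for days (if there isn't one right now, buy nothing).

As for why I don't buy the S&P 500 or the Nasdaq 100: I already hold them in my 401k, so there is no need to duplicate them in my active investing.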
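The split above can be sketched as a few lines of Python. This is only an illustration under assumptions of my own: the account size of 100,000, the helper name `allocate`, the tier labels, and the placeholder theme tickers `THEME_A` and `THEME_B` are all hypothetical. Only the 60/30/10 weights and the four leader tickers come from the plan.

```python
from fractions import Fraction

# Tier weights from the plan; the tier names are illustrative labels.
TIERS = {
    "leaders": Fraction(60, 100),      # 3-4 market leaders, held months to years
    "hot_themes": Fraction(30, 100),   # 1-2 hot themes, held weeks
    "speculative": Fraction(10, 100),  # at most 1 hyped stock, held days
}


def allocate(capital, picks):
    """Split capital across tiers, then equally among the picks in each tier.

    A tier with no picks stays uninvested ("if there isn't one, buy nothing").
    Returns (positions, uninvested_cash).
    """
    positions = {}
    cash = Fraction(0)
    for tier, weight in TIERS.items():
        budget = capital * weight
        names = picks.get(tier, [])
        if not names:
            cash += budget
            continue
        for name in names:
            positions[name] = budget / len(names)
    return positions, cash


# Hypothetical account size and placeholder theme tickers.
positions, cash = allocate(
    100_000,
    {
        "leaders": ["NVDA", "VST", "PLTR", "HOOD"],
        "hot_themes": ["THEME_A", "THEME_B"],
    },
)

for name, amount in positions.items():
    print(f"{name}: {float(amount):,.0f}")
print(f"uninvested: {float(cash):,.0f}")
```

With four leaders, each one ends up at 15% of the account, matching the "15% each" above. Because no speculative stock is listed, that 10% simply stays uninvested.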

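This allocation has several benefits:

- Higher capital utilization, avoiding too much idle cash (my cash is already kept outside the brokerage account)

- A narrower stock-picking universe: before buying anything, ask yourself three questions. Is it a leader? Is it part of a hot theme? Is it currently being hyped? That immediately rules out 90% of stocks

- Time saved: I no longer need to hunt for opportunities every day; I just buy when a hot theme arrives

- Peace of mind: with leaders making up 60%, the overall volatility of the portfolio is lower

Of course, US stocks are already too high in the short term, so I won't take large positions for now. I'll start putting this into practice when an opportunity comes.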

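I may write more investing-related posts on this blog. To start, in this one I want to list a few mistakes that are easy to make when you first begin investing, pitfalls I have fallen into myself.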

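Choosing the wrong market

Just as a game won't make you fight the final boss right at the start, a new investor is better off not taking on a high-difficulty market. The A-share market has to be mentioned here: it is a textbook hell-difficulty market. For details, see 《为什么A股是价值投资者的坟墓?》 (why are A-shares a graveyard for value investors?).

I am of course not saying that investing in A-shares always loses money, or that investing in US stocks always makes money (a small number of people may even find it easier to make money in A-shares). The point is that in an abnormal market, you cannot use feedback to correct your mistakes and gradually improve (I will write more about "feedback" in the future). For example, I have seen many people enter the A-share market hoping to get rich, take a beating, quit soon after, and conclude from then on that "investing is all a scam, and not investing is the best investment." Choosing the wrong market makes people lose faith in investing itself, and that is the most frightening part.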

Putting in a large amount of money from the start

This is probably the most common beginner mistake, and I made it too.

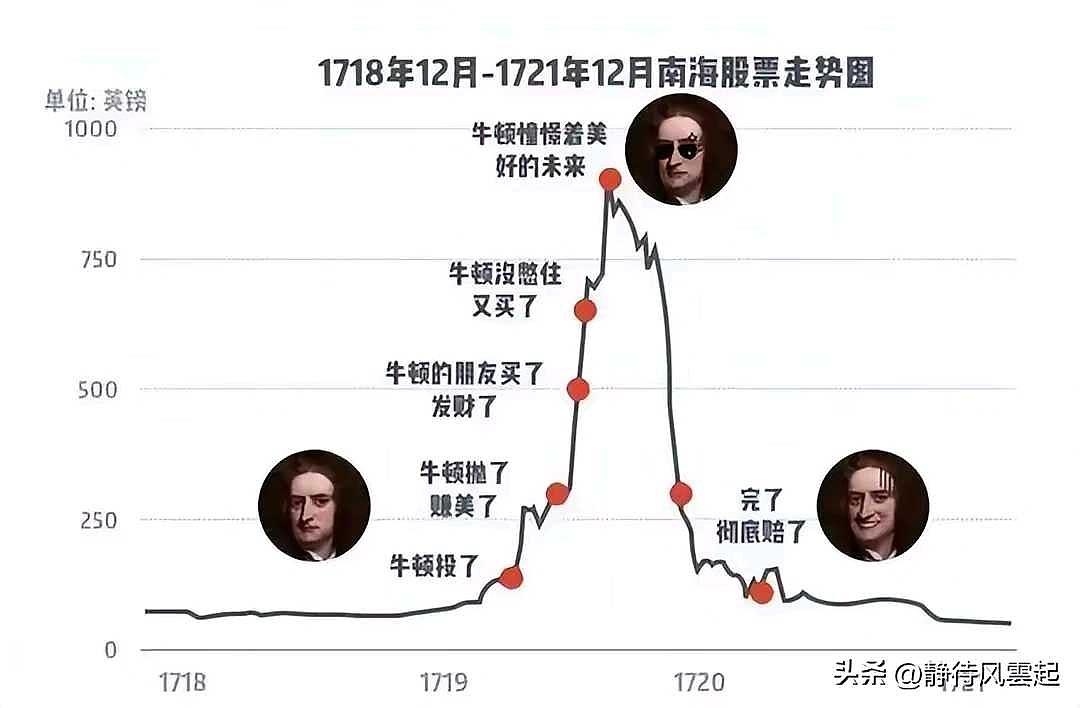

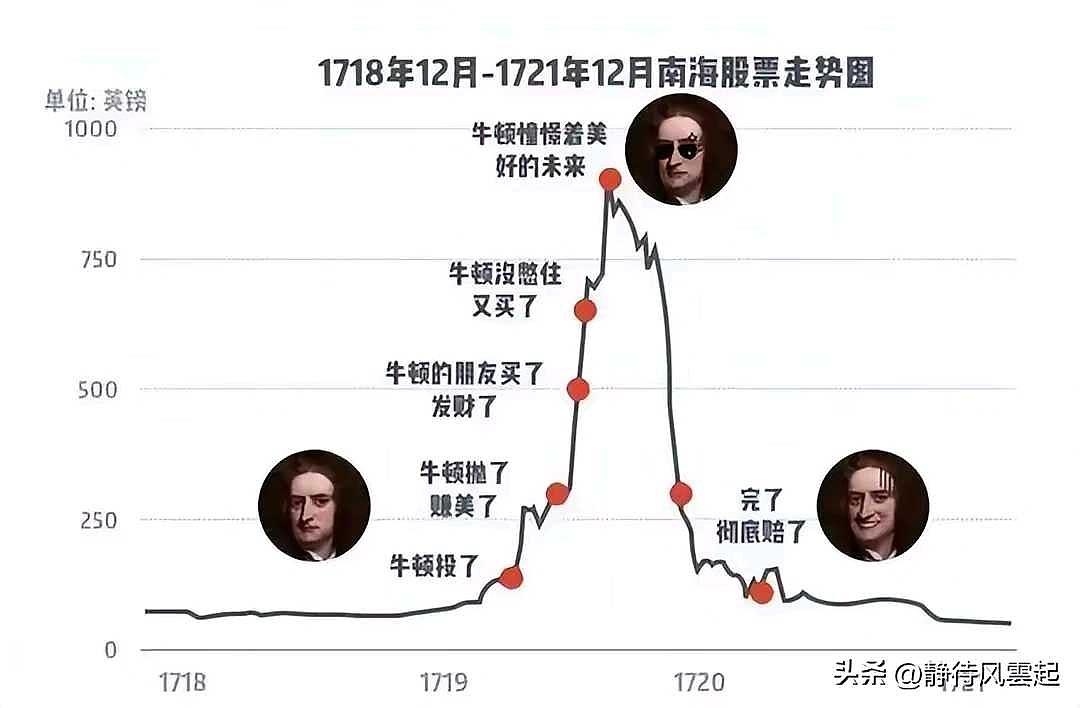

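Apart from a tiny number of exceptionally gifted people, almost everyone loses money when they first start investing, whatever the market. The reason is simple: most people start investing because the market, or some particular asset, is already as hot as it can get. When everyone around you is making money and you alone are left behind, how could you not get anxious? Newton was in exactly this position in his day.

And the result? By the time you enter, the bull market is in its final stage. Soon afterwards, the stock or the market crashes.

Since losing money at the start is a law of nature, it is better to find ways to limit the loss than to fight it. I explain how further below.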

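Jumping back and forth between investing styles

Mention "investing and trading" and some people will say: isn't it just buying low and selling high? But that oversimplification is harmful, because it ignores that investing and trading come in different styles, which differ enormously and can even be complete opposites. For example, the "value investing" school we hear about most requires investors to spend a great deal of time thoroughly understanding a company, whereas ultra-short-term day trading often does not care about fundamentals. You can go long or short, trade on the left side (before a reversal) or the right side (after the trend is confirmed), buy or sell all kinds of options; you can buy the broad market, sector ETFs, individual stocks, even cryptocurrencies, and so on.

At any point in time, every option is open to the investor. Having many options is good, but it also leaves investors paralyzed by choice. Meanwhile, on social media one person has just made ten times their money on options on A, and another has doubled an investment in B in five days. These options and voices leave beginners deeply confused and anxious, so they start consuming huge amounts of news for fear of missing an opportunity. They buy the moment they see good news and short the moment they hear bad news, or else copy the trades of assorted influencers and "gurus". Over time, whether or not they make money, their lives are swept along by the market, and they live in constant anxiety.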

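I really like Dawei's point in 《Ep 53. AI 能否帮我们做出更好的投资决策? - 捕蛇者说》 (can AI help us make better investment decisions?): "If investing doesn't make our lives better, it is meaningless." In my view, the key to not being swept along by the market is establishing your own trading style. Every trading approach has strengths and weaknesses, but what they share is that each can make money. It is like programming languages: you cannot master every language, and you don't need to. Rather, your ceiling is set by the trading approach (programming language) you are best at. Time is limited, so you must shrink the solution space of your decisions. That not only makes decisions more efficient, it also lets you focus on refining one particular approach.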

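So, how do you start?

There is no standard answer, but I want to offer a framework.

Step 1. Suppose you have 100,000 on hand that you want to invest. The first thing to do is divide that amount by ten: take 10,000 to invest and leave the remaining 90,000 in the bank. That way, even if you lose half, it is only 5,000, which won't really hurt.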
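The arithmetic in Step 1 can be written out as a tiny sketch. The function name `starter_stake` and its parameters are hypothetical names of mine; the divide-by-ten rule and the "lose half" scenario are the ones from the paragraph above.

```python
def starter_stake(total, divisor=10, loss_fraction=0.5):
    """Return (amount invested, worst-case loss, loss as a share of total).

    divisor=10 is the "divide by ten" rule; loss_fraction=0.5 is the
    "even if you lose half" scenario from the text.
    """
    invested = total / divisor
    loss = invested * loss_fraction
    return invested, loss, loss / total


invested, loss, share_of_total = starter_stake(100_000)
print(f"invest {invested:,.0f}, worst case lose {loss:,.0f} "
      f"({share_of_total:.0%} of everything you have)")
```

Seen this way, losing half of the stake costs 5% of the total, which is why the mistake stays survivable.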

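Step 2. Spend three to six months trying as many investing methods as you can, in whatever way you like. The focus here is not whether each method makes money, but carefully observing how each kind of trade makes you feel; write it down if that helps. A few examples:

- An individual stock is down 10%. How did I feel at the time, and could I calmly cut the loss?

- An option went to zero. Did I feel like ending it all?

- The S&P 500 rose 2% in a month. Did I find that too slow?

In this way you build a scale that quantifies how well you tolerate each way of investing. That level of fit determines the approach you should take in the future. Only when an approach suits your temperament can you really execute it well. Trust me, nobody truly knows themselves before practice. What you think you know about yourself is mostly an illusion.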
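One possible way to keep such a scale is a small journal with a comfort score per trade. Everything specific here is an assumption for illustration: the 1-to-5 scoring, the sample entries, and the helper names `comfort_scale` and `most_comfortable` are made up, and averaging is just one simple way to summarize.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical journal entries: (investing style, what happened, comfort 1-5).
journal = [
    ("individual stocks", "down 10%, cut the loss calmly", 4),
    ("individual stocks", "held through earnings, slept badly", 2),
    ("options", "position went to zero", 1),
    ("index fund", "up 2% in a month, felt too slow", 3),
    ("index fund", "market dipped, did not care", 5),
]


def comfort_scale(entries):
    """Average the comfort scores recorded for each investing style."""
    by_style = defaultdict(list)
    for style, _note, score in entries:
        by_style[style].append(score)
    return {style: mean(scores) for style, scores in by_style.items()}


def most_comfortable(scale, k=2):
    """Return the k styles with the highest average comfort."""
    return sorted(scale, key=scale.get, reverse=True)[:k]


scale = comfort_scale(journal)
shortlist = most_comfortable(scale)
print(scale)
print(shortlist)
```

The scores rate how the trade felt, not whether it made money, which is the point of this step.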

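Step 3. Once you have found the 1 to 2 investing approaches you are most comfortable with, lock them in and stop considering other approaches for the next several years. At the same time, find books that explain those specific approaches and study them closely. Then just begin. Repeated practice combined with the experience of those who came before is sure to make you improve quickly.

I am a beginner myself and still learning. This post is a summary of where I am so far, and I hope it helps. For reasons of length, many points could only be touched on, and I will expand on them later as ideas come.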